The Incomplete Map: Why Materials Science Won't Have Its AlphaFold Moment

AlphaFold is a powerful interpolator, not an extrapolator. Savage's small-world versus large-world framework explains why - and why the real prize in materials AI is building tools that know what they don't know.

In 2020, Google DeepMind's AlphaFold set a precedent for AI-accelerated science, with claims that it has saved hundreds of millions of years of research time by predicting protein structures. DeepMind had solved the 50-year-old "protein folding problem" by mapping amino acid sequence to 3D protein structure: this was nothing less than a new paradigm for AI in science.

This week, I've heard prominent, experienced and successful VCs and investors pose a now-familiar question: "when will chemistry and materials have their AlphaFold moment?" They are actively inviting the sciences to raise their ambition for a general materials model.

But this framing misses one critical point: in the years since its deployment, AlphaFold has repeatedly struggled to make accurate predictions on out-of-distribution inputs, and has been systematically overconfident when doing so.

Before racing to replicate the successes of AlphaFold, we must ask ourselves - what kind of problem did it actually solve?

Small World Puzzles vs. Large World Mysteries

I have been reading Kay and King's Radical Uncertainty, and was struck by a framing of modelling problems that originated in the 1950s with the mathematician Leonard Savage.

Savage distinguished between "Small World" and "Large World" problems. Small World problems possess a known set of outcomes and a stationary logic that allows for reward optimization. These are "puzzles" where the right answer can be definitively confirmed - they might occur in the casino, or in a well-designed experiment.

Large World problems have un-enumerated outcomes and potential non-stationarity, and can trick the unwary into overconfidence. These are mysteries that do not allow for simple evaluation. They occur in life, where we have infinite variations on the actions available and no clear sight of rewards.

Computers excel at solving small world problems, humans at large world problems. Or, as Hans Moravec put it:

"it is comparatively easy to make computers exhibit adult level performance on intelligence tests or playing checkers, and difficult or impossible to give them the skills of a one-year-old when it comes to perception and mobility"

The ChatGPT moment appeared miraculous because it revealed that much of human language operates as a small-world problem. A statistical model with fixed weights, embedding dimensions, and outputs can reproduce in-distribution text far beyond our expectations. The model did not suddenly solve large world mysteries; rather, it dramatically expanded the boundaries of what we consider amenable to small-world treatment.

Do these conditions hold for materials science?

Material Design is the Opposite Problem

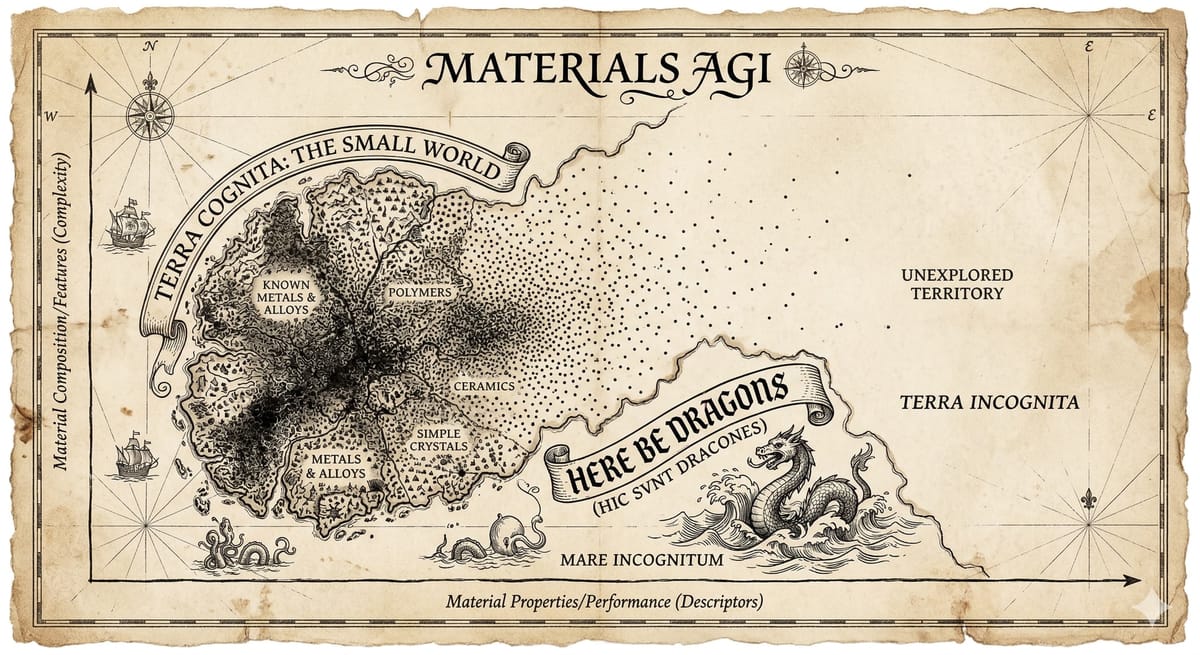

The holy grail of materials AI is perfect inverse design - we specify our functional needs, and the system provides the recipe for a perfect chemical or material. The requested recipe will either be already known, a trivial interpolation between known points, or entirely out-of-distribution. We hope for the last of these; novelty is the purpose of materials discovery.

The parameter space for chemical and material design is vastly more complex than language. Not only do the atomic ingredients provide near-endless possible compounds, these are overlaid with structural and micro-structural variations, non-Markovian processing paths, and multi-scale interactions (including problems like protein folding).

Within this huge parameter space, our historical sampling is incredibly sparse. We also face a genuine risk of non-stationarity: because physical synthesis is highly sensitive, if experiments and processes are insufficiently instrumented, uncontrolled or unmeasured parameters (from equipment degradation to ambient conditions) can lead to shifting distributions.

AlphaFold is a powerful interpolator within densely sampled regions of structure space, with the capacity to hugely accelerate folding calculations close to known behaviours. Rather than seeking artificial general intelligence (AGI) for materials, short- to medium-term value can be found in asking: which parts of materials parameter space are actually small-world enough to be tractable?
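One crude way to make that question concrete is to ask how many past experiments sit close to a proposed point in parameter space. The sketch below is only an illustration under stated assumptions: the two-dimensional "recipes", the Euclidean distance metric, the `radius` and `min_neighbours` thresholds, and the function name `is_densely_sampled` are all hypothetical choices, and a real materials dataset would need a carefully chosen, normalised representation.

```python
import math


def is_densely_sampled(query, samples, radius, min_neighbours=3):
    """Return True if at least `min_neighbours` past samples lie within
    `radius` of `query` (Euclidean distance in normalised parameter space)."""
    neighbours = sum(1 for s in samples if math.dist(query, s) <= radius)
    return neighbours >= min_neighbours


# Hypothetical, normalised (composition, temperature) pairs from past runs.
history = [
    (0.10, 0.20),
    (0.12, 0.22),
    (0.11, 0.18),
    (0.13, 0.21),
    (0.90, 0.85),  # a lone exploratory experiment
]

near_cluster = is_densely_sampled((0.11, 0.20), history, radius=0.05)
near_loner = is_densely_sampled((0.88, 0.86), history, radius=0.05)
unexplored = is_densely_sampled((0.50, 0.50), history, radius=0.05)
```

Under these assumptions, only the first query falls in a region where interpolation is plausibly safe; the other two are the sparse territory the rest of this piece is concerned with.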

The Loop, not the Model

AI for materials will be economically productive, but primarily for optimization in densely sampled subsets of parameter space. We are already seeing major lab investments in high-temperature superconductor searches, where domain-specific targets may return quick wins. In my own research, we are leveraging this to automate recipe design for laser optimization in notoriously challenging semiconductor growth. Automated labs are inherently small world - closed inputs and closed outputs.

Today, frontier LLM producers pay experts thousands to devise unseen problems. This is cheap compared to a single experimental verification for a material in the physical world. The economics of data generation in materials is categorically different from that of language.

These approaches are valuable, but they are not materials AGI. Despite billions flowing into venture-backed neolabs, no static foundation model will extrapolate reliably across the full complexity of chemical and materials space.

But this is not an argument against ambition.

The true value of general models will not lie in direct, zero-shot prediction across the large world of materials. Instead, value will belong to systems that master uncertainty prediction. A well-designed, uncertainty-aware model will guide expert scientists - and automated lab equipment - to direct their attention towards spaces where little is currently known.
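As a rough illustration of what "guiding attention" could look like, the sketch below uses disagreement within a bootstrap ensemble as a stand-in for model uncertainty, and proposes the candidate experiment where the ensemble disagrees most. This is a toy under loud assumptions, not a description of any particular system: the one-dimensional linear model, the made-up data, and the names `fit_line`, `bootstrap_ensemble`, `predict_with_uncertainty` and `next_experiment` are all hypothetical.

```python
import random
import statistics


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = a + b*x; returns (a, b)."""
    mean_x = statistics.fmean(xs)
    mean_y = statistics.fmean(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0.0:
        # Degenerate resample (all x identical): fall back to a constant model.
        return mean_y, 0.0
    b = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sxx
    return mean_y - b * mean_x, b


def bootstrap_ensemble(xs, ys, n_models=200, seed=0):
    """Fit one line per bootstrap resample (sampling with replacement)."""
    rng = random.Random(seed)
    n = len(xs)
    models = []
    for _ in range(n_models):
        idx = [rng.randrange(n) for _ in range(n)]
        models.append(fit_line([xs[i] for i in idx], [ys[i] for i in idx]))
    return models


def predict_with_uncertainty(models, x):
    """Ensemble mean and spread (population std) of the predictions at x."""
    preds = [a + b * x for a, b in models]
    return statistics.fmean(preds), statistics.pstdev(preds)


def next_experiment(models, candidates):
    """Pick the candidate where the ensemble disagrees the most."""
    return max(candidates, key=lambda x: predict_with_uncertainty(models, x)[1])


# Hypothetical measurements, all taken in the narrow range 0.0 to 0.5.
xs = [0.0, 0.1, 0.2, 0.3, 0.4, 0.5]
ys = [0.02, 0.11, 0.18, 0.33, 0.39, 0.52]

models = bootstrap_ensemble(xs, ys)
candidates = [0.25, 1.0, 3.0]
chosen = next_experiment(models, candidates)
spreads = {x: predict_with_uncertainty(models, x)[1] for x in candidates}
```

In this toy, the ensemble's spread grows with distance from the sampled range, so the proposal lands on the candidate furthest from existing data. Ensemble disagreement is only one possible uncertainty signal, and it can itself be overconfident far from the data, which is why such proposals are better treated as prompts for expert judgement than as answers.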

This will be a collaborator in experimental design, embodying the current state of knowledge from centuries of scientific exploration to act as our incomplete map - and we can use this to navigate towards "here-be-dragons".

The Value of an Incomplete Map

An incomplete but honest map of materials provides a shortcut to serendipity. The question is not whether AI can "solve" materials science. It is whether we are building tools honest enough to show us exactly what they do not know.

With such a tool, we can close the discovery loop. By aligning the huge economic potential in materials AGI with the curiosity-driven approach of the world's best scientists, we can identify and reward high-value sampling in the physical world.

That is the workflow worth building. And navigating the large world of materials - through curiosity, economics, and an honest map - is the problem worth solving.