Evaluating the Incomparable: How to Judge a Portfolio of One

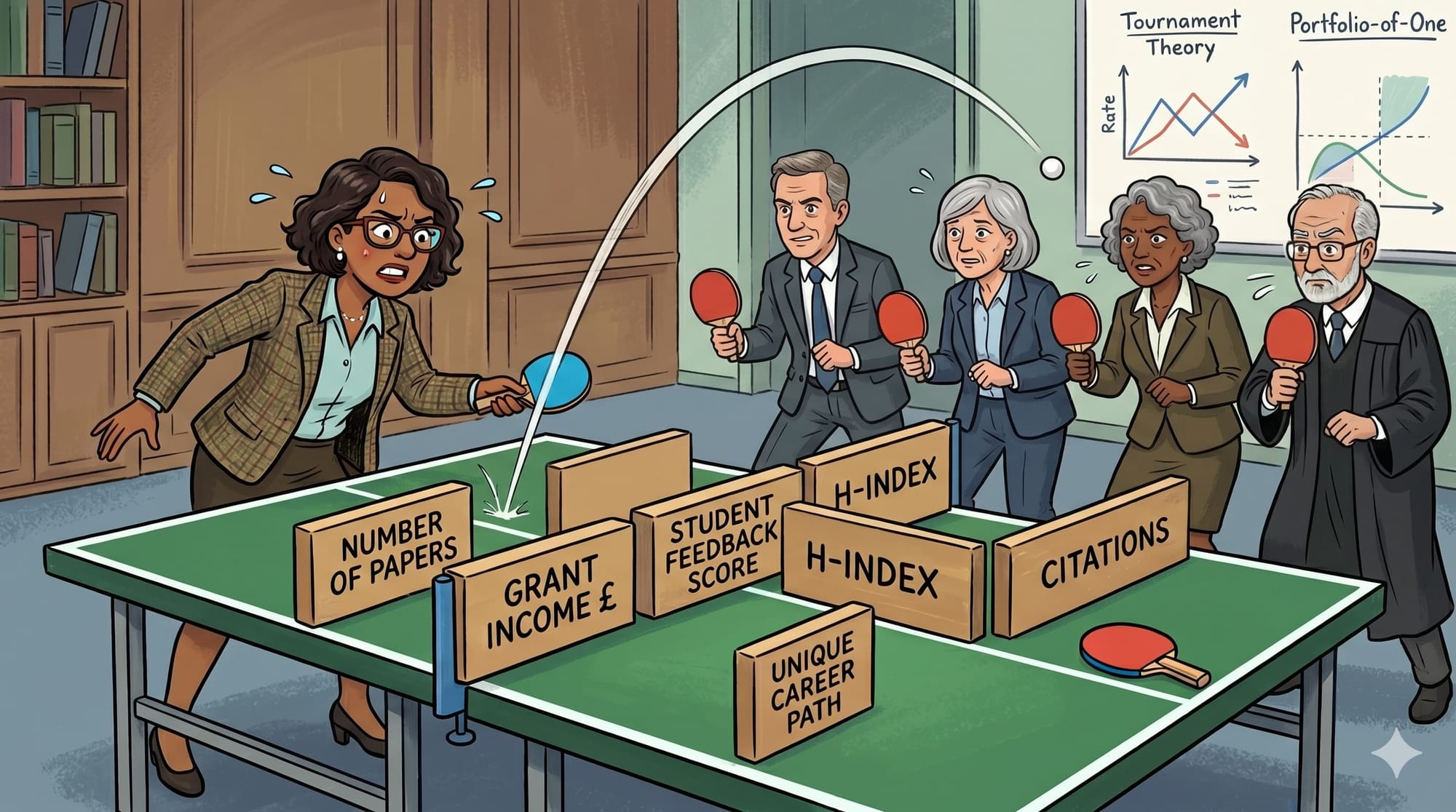

When evaluating a "portfolio-of-one," the attempt to use standard metrics isn't rigour, it’s category error. From promotions panels to VC offices, our obsession with defensible metric counting often masks the very potential we claim to be seeking.

A panel of experienced, thoughtful experts sit around a table, with detailed paperwork in front of them describing a unique person and their unique achievements. After a moment to collect their thoughts, the chair starts: "So... what do we think?"

This scene plays out on recruitment committees, venture-capital offices, fellowship assessments, and - for me this week - on promotions panels. The recruitment committee has a job description, the VC has a funding framework, and the panel has a rubric, each defining some objective and measurable criteria.

Despite the collective expertise in the room, the process is architecturally set up to fail.

Absolute Performance or Relative Tournament?

Panels want to reward behaviour that benefits the organization, or to select people who can further the organizational goals. If these behaviours were easy to measure, we would not need an expert committee; we would just use a spreadsheet.

Lazear and Rosen recognised that when a group cannot measure absolute effort - perhaps it is expensive, slow or inherently impossible to assess - they tend to measure relative performance. Even where this is not explicitly stated, it can become an implicit rule enforced through the pursuit of consistency and equity.

This is not necessarily a problem: tournament theory has been shown to be an optimal approach when effort cannot be measured, provided there is a known field of competitors and commensurable outputs. This requires homogeneity: two people performing essentially the same task.

In other words, it is generally easier to say who is better than to say what a performance is worth.

But at the extremes of seniority and specialisation - professors, founders, deep-tech pioneers - this model collapses. When we are assessing a "portfolio-of-one", the selection and use of comparable metrics is not rigour, it is systematic bias.

The Cost of Getting it Wrong

In 2025, only 32% of UK professors were female, despite comprising 49% of academic staff. A mere 5% of professors had a disability, compared with 11% of the wider academic pool. And just 1% identify as Black compared with 3.5% of all academics.

Ability is equally distributed, but reward is heavily skewed. This pattern of systematic under-representation is not noise - it is signal. The genuine question for leadership is whether the evaluation mechanism itself, regardless of intent, is incapable of recognising non-standard excellence.

The perception of selection as a tournament carries well-recognised risks: structural bias in self-selection, and incentivisation of rent-seeking behaviour. Upstream nomination - widening the pool - is a necessary but insufficient fix; a diverse pool entering a faulty evaluation process simply spreads the damage more equitably.

EDI is not only a moral obligation, but a clear diagnostic signal of a broken process; wherever rewards are disconnected from performance, an organization has lost its most important asset. By systematically under-supporting diverse experience and non-linear paths, we lose the breadth that correlates with high-performance innovation.

Comparing the Unmeasurable

The statistician Savage distinguished between Small World problems - with enumerable outcomes and stationary logic - that reward optimization, and Large World mysteries - with unenumerable outcomes - that defy overconfident modelling.

Small World outcomes fit neatly into boxes; a researcher generates income and produces papers, a teacher gets positive feedback, an innovator founds a spin-out. Such metrics can be counted. However, they create an illusion of measurement by discarding information that does not fit the model. Worse still, Small World stationarity assumes that what denoted success in the past will apply in the future. In an evolving innovation environment, this is intellectually naive.

In academia, the culture of counting is well recognized across the sector - Wilsdon likened it to a tide that washes away nuanced impacts in favour of proxy metrics.

In our portfolio-of-one, the Large World uniqueness of the individual - their career path, context, opportunity set - is rejected as noise. It is difficult to account for in a rubric, subjective to discuss, and often uncomfortable to discuss at a panel. Yet it is the very uniqueness of skills and capabilities that are being sought: Claudio Fernández-Aráoz argues that in high performance environments, the most critical attribute isn't competency, but potential.

This is not an argument for more modifiers to create equity. Each modifier may address a specific structural disadvantage, but unique careers are not decomposable in a set of adjustments.

The Noise of Consensus

The justification used for committee evaluation lies in the wisdom of crowds. For over a hundred years, the Galton "Ox Weight" experiment has stood for the ability of crowds to - on average - make better predictions than individuals. Unfortunately, in 2021 Kahneman's "Noise" described a more uncomfortable finding: committees that communicate don't reduce noise, they amplify it. Through no fault of the participants, effects such as anchoring, cascade effects, and social pressures towards false consensus combine to undermine the best intentions.

In short, committees tend to produce defensible decisions, not accurate ones. Tournaments, bench-marking and direct comparisons around unfair but objectively countable metrics provide a path of least resistance to consensus. The crowd is good at the Ox weight because it is Small World problem; it is bad at promotions because it is a Large World mystery.

This is not a failing of the individuals on the panels - it is a direct and predictable outcome of the committee mechanism of evaluation.

Starting with the Person: The Dyad Approach

What if, instead of starting with a preconceived rubric, we started with the person and work towards organisational need?

In "Loonshots", Safi Bahcall describes a mechanism used by McKinsey. They abandon the committee in favour of the dyad: two investigators, one domain expert and one generalist, as equal partners. This removes the noise of the committee, and creates a small and directly accountable unit. The expert provides contextual insight, the non-expert demands that significance be justified in plain terms.

Their role is to produce a consensus report, but before assessing what the candidate has done, they must produce an "opportunities map", understanding career interruptions, institutional support, funding environment, disciplinary norms, caring responsibilities. This foregrounding of EDI grounds decisions as epistemology, not as accommodation.

The dyad has the freedom to use both objective measures, and qualitative input - line-manager reports, stakeholder interviews, partnership evaluation and even direct discussion with the applicant. They can explore the Large World of impact and significance that a rubric misses.

Their report goes to a single decision-maker: a Dean, a Partner, a Hiring Lead. Where committee decisions are hidden by collective responsibility, the investigation by dyad and decision by principal provides a clear audit trail. The written report goes to the candidate regardless of outcome. A "no" becomes actionable feedback, not a closed door.

Beyond the structural benefits, the dyad method addresses a cognitive challenge in the committee room: data overwhelm. Panels often require members to review dozens of complex cases. Research on decision fatigue suggests that the conservatism of judgment degrades as the volume of decisions increases. The dyad method replaces "Parallel Skimming" with "Distributed Deep-Dives".

The Cost

The dyad system will sometimes produce the wrong answer. All systems do. There is no solution to the "finite time" ↔ "equity of access" ↔ "consistency of application" tension. The goal is not perfection - it is delivering a good process, that transparently reports the decision outcome and the basis for the decision, and that clearly defines accountability.

The dyad system is resource-intensive. But processes that embed transparency and accountability are non-negotiable for high-stakes decisions.

Minimum viable shifts to the system are possible:

- Ownership: Decisions should be signed and owned by a principal with written feedback

- Context First: Committee discussions should preface with a foreground opportunity map before discussing any metrics

- Actionable Feedback: The evaluation must go to the candidate, such that a "no" becomes a roadmap

Whenever we evaluate a portfolio-of-one, the evaluation itself must be bespoke. Standardisation is not fairness; it is often false precision that shoehorns Large World behaviours into Small World measurements.

As decision makers, we can never eliminate uncertainty. We must instead seek to be radically honest about the limits of our models. The first step is designing systems that prioritize context over consensus and accountability over defensibility.